
LakeDrops Drupal Consulting, Development and Hosting: Test, Replay, Debug: Closing the Feedback Loop

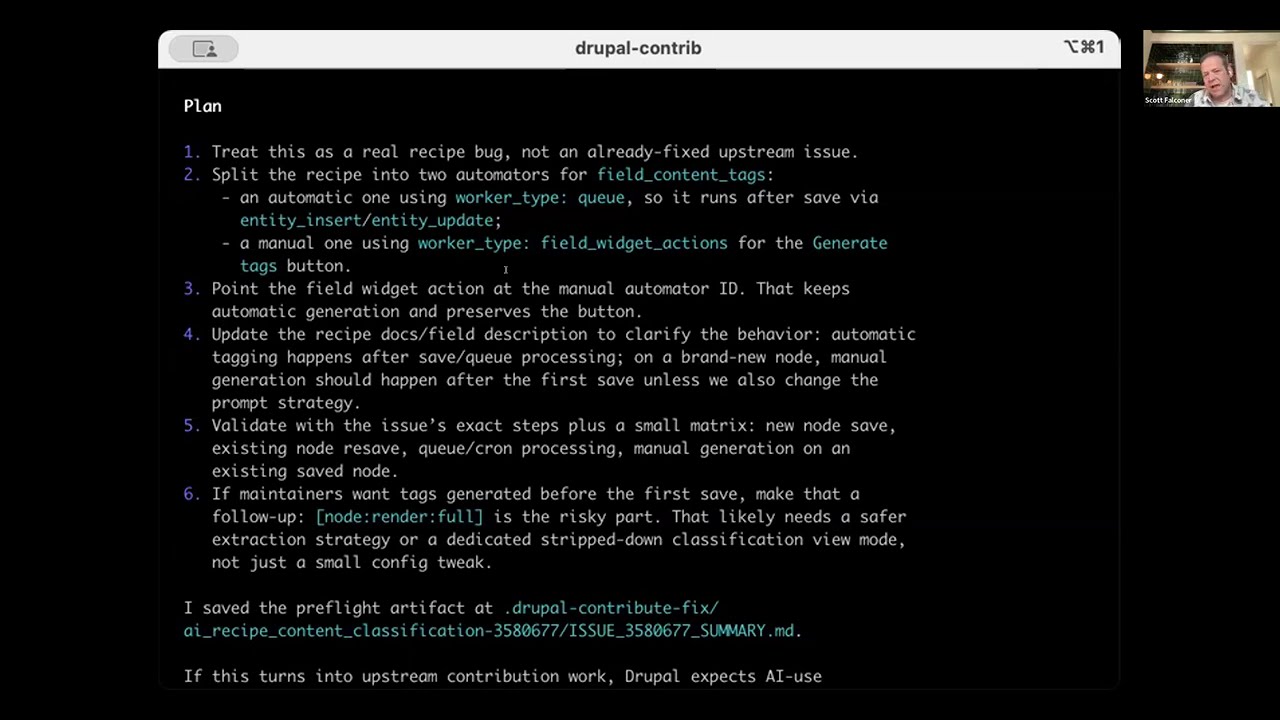

For years, building workflows meant working blind: configure, deploy, hope, then check the logs. ECA's integrated test, replay, and debug features close that feedback loop. Put the modeler into listening mode, trigger some events, and see the execution results immediately, including the token values at each step.

On any page where ECA processed events, a small widget appears. Click it, and the modeler opens in an overlay with the recorded execution data, so you can replay what just happened right there, in context.

Recording is expensive, even after optimizations that cut its CPU usage by 70% and its storage by 85%, so enable it only temporarily while debugging. Replaying production events lets you step through failures using the actual data from the moment they occurred. Conditional recording triggers and JSON export across environments are still to come.

No other workflow tool, whether inside a CMS such as WordPress or Joomla or a standalone automation platform such as n8n or Zapier, offers step-through replay of production recordings at this level. It is also what existing ECA users requested most: visibility into workflow execution. This is infrastructure-level work that required sustained investment, but its value compounds over the years. The feature is exclusive to the Workflow Modeler and is not available in the bpmn.io modeler.
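To make the record-then-replay idea more concrete, here is a minimal sketch in Python. It is not ECA's implementation (ECA is a PHP module for Drupal), and every name in it (`ExecutionRecorder`, `run_workflow`, `replay`, the `content_saved` event) is hypothetical. It assumes a workflow is simply a list of actions that read and write a shared dictionary of token values. When recording is switched on, the recorder snapshots the tokens after every step, and `replay` walks the recording one step at a time, reporting which tokens each step added or changed.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Iterator, List, Tuple

Tokens = Dict[str, Any]
Action = Callable[[Tokens], None]


@dataclass
class StepRecord:
    """One executed step (hypothetical): its name and the token values after it ran."""
    step: str
    tokens: Tokens


@dataclass
class ExecutionRecorder:
    """Hypothetical recorder. Off by default, since recording costs CPU and storage."""
    enabled: bool = False
    records: List[StepRecord] = field(default_factory=list)

    def record(self, step: str, tokens: Tokens) -> None:
        if self.enabled:
            # Shallow copy, so later changes to the live token dict don't rewrite history.
            self.records.append(StepRecord(step, dict(tokens)))


def run_workflow(event: Dict[str, Any], actions: List[Tuple[str, Action]],
                 recorder: ExecutionRecorder) -> Tokens:
    """Run each action against shared tokens, recording a snapshot after every step."""
    tokens: Tokens = {"event": event["name"], **event.get("data", {})}
    recorder.record("event", tokens)
    for name, action in actions:
        action(tokens)
        recorder.record(name, tokens)
    return tokens


_MISSING = object()  # sentinel: distinguishes "key absent" from "value is None"


def replay(records: List[StepRecord]) -> Iterator[Tuple[StepRecord, Tokens]]:
    """Step through a recording, yielding each step with the tokens it added or changed."""
    previous: Tokens = {}
    for record in records:
        changed = {k: v for k, v in record.tokens.items()
                   if previous.get(k, _MISSING) != v}
        yield record, changed
        previous = record.tokens


# Usage: two hypothetical actions triggered by a hypothetical "content_saved" event.
def normalize_title(tokens: Tokens) -> None:
    tokens["title"] = tokens["title"].strip().title()


def flag_empty(tokens: Tokens) -> None:
    tokens["is_empty"] = tokens["title"] == ""


recorder = ExecutionRecorder(enabled=True)
run_workflow({"name": "content_saved", "data": {"title": "  hello world "}},
             [("normalize_title", normalize_title), ("flag_empty", flag_empty)],
             recorder)

for record, changed in replay(recorder.records):
    print(record.step, changed)
```

Recording is off by default in the sketch, mirroring the advice to enable it only while debugging. The snapshots are shallow copies, so a real recorder handling nested token values would need a deep copy, which is part of why recording is costly.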